How the Internet Archive Decides What to Archive: Priorities, Frequency, and Data Sources

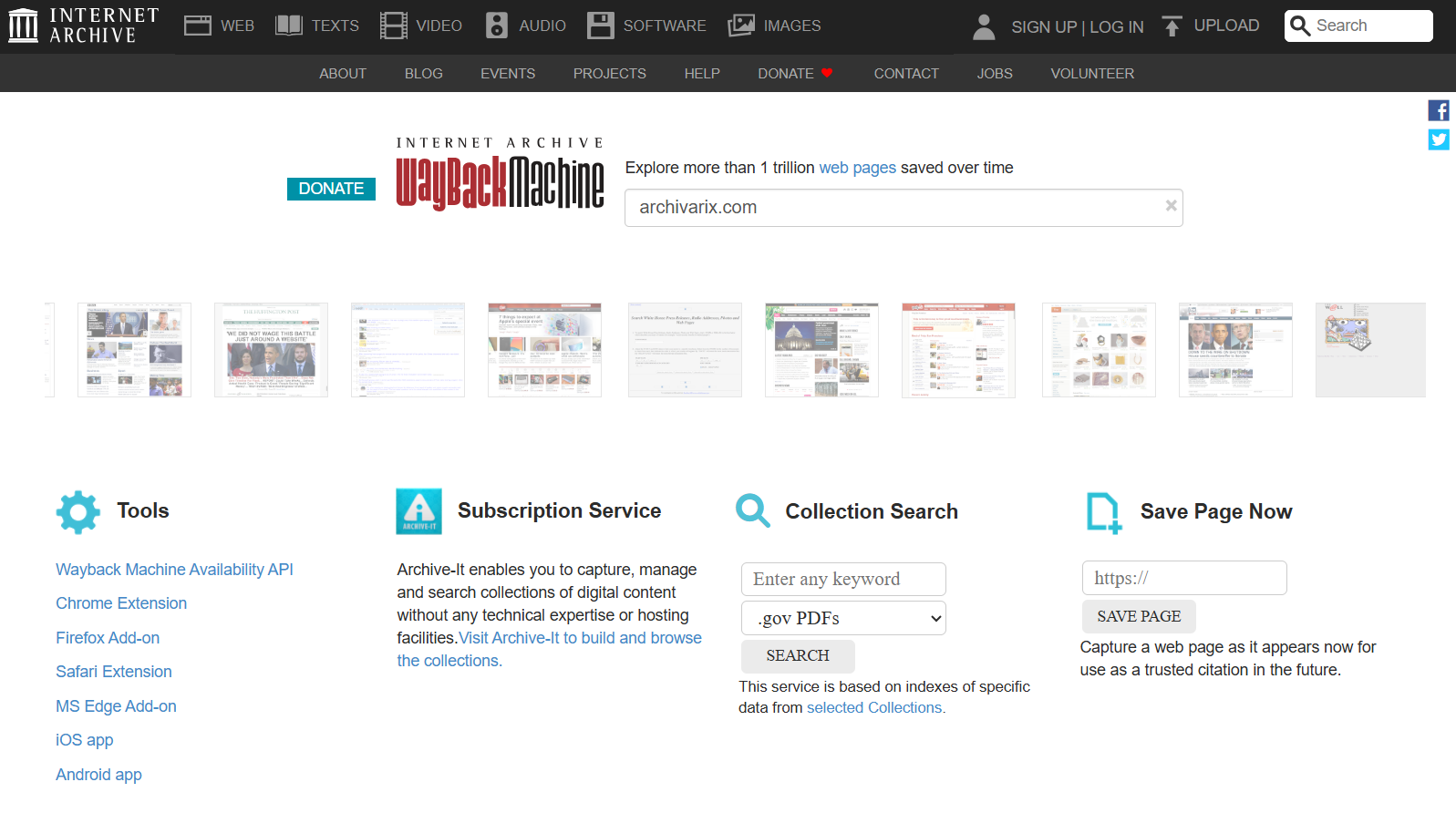

One trillion saved pages. Over 99 petabytes of data. Hundreds of crawls running simultaneously every day. Behind these numbers lies a question that everyone who works with web archives professionally ends up asking: how exactly does the Wayback Machine decide which sites to crawl and how often to return to them, and why are some domains represented in the archive by thousands of snapshots while others have only a few records over ten years?

Understanding these mechanisms is critically important for anyone involved in website restoration. If you know how the system works from the inside, you can predict what you'll find in the archive and what won't be there. And you can influence the archiving of your own sites while they're still live.

Web Archive in 2026: What Has Changed and How It Affects Website Restoration

In October 2025, the Wayback Machine reached the milestone of one trillion archived web pages. Over 100,000 terabytes of data. This is a massive achievement for a nonprofit organization that has been operating since 1996. But behind this impressive number lies a difficult period that the Internet Archive has gone through over the past year and a half. Cyberattacks, lawsuits, changes in access policies, and new challenges from AI companies all directly affect those who use the web archive for website restoration.

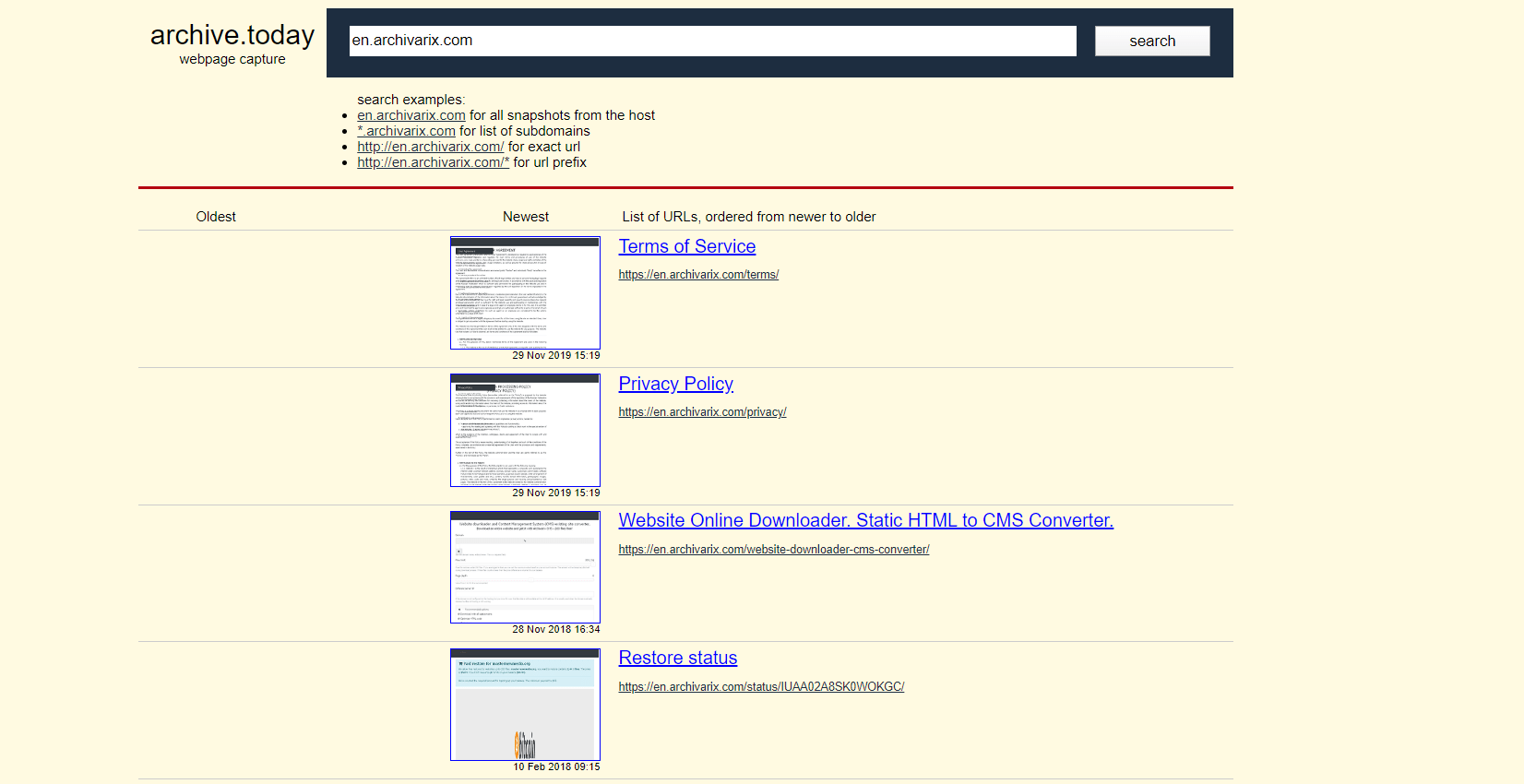

Best Wayback Machine alternatives. How to find deleted websites.

The Wayback Machine is the best-known and largest archive of websites in the world. It holds more than 400 billion pages on its servers. Are there any other archiving services like Archive.org?
